One of the abiding images of the coronavirus (COVID-19) outbreak in the UK has been the Prime Minister, Boris Johnson, looking nervous and uncomfortable, flanked by his scientific advisers at the regular press conferences. With three white men in suits in a wood-panelled room, the aim presumably was to project a sense of control and authority. The rhetoric – that the government’s response was always ‘led by the science’ – was reinforced.

A number of uncertainties

Certainly, the UK government’s advisers have been calm, clear and convincing, articulating positions effectively across the media. But debates soon emerged in the UK about whether to cancel mass gatherings, isolate at-risk groups and close schools. The UK position seemed out of kilter with other ‘science-led’ policies elsewhere. ‘The science’ suddenly did not seem so singular and certain, as competing scientific views confronted each other.

As all the scientists involved know, things are very uncertain indeed. Defining a policy is a judgement call, one based on assessing many competing positions. Indeed, many of the decisions are currently being made in the realm of ignorance – where the outcomes and their likelihoods are simply not known. The list of uncertainties is huge: we don’t know the incubation period, nor the infectivity before symptoms. We don’t know the seasonal dimensions of the disease. We have little idea how specific the disease is to certain population groups. We have no idea whether re-infection can occur or whether herd immunity is possible. We don’t even know the crucial epidemiological figures – the R0 number (the average number of onward infections from a single case) or the mortality rate – except within vast possible ranges.

As Graham Medley, professor of infectious disease modelling at the London School of Hygiene and Tropical Medicine, put it recently in a BBC interview, “anyone who says they know what will be happening in six months is lying”. He’s right. So why is so much store set by ‘the science’, when it’s so plural, contested and uncertain? Should we really be so reliant on ‘the models’ that offer predictions and guide emergency planning?

Relying on models

With the parameters so uncertain, modelling is of course just informed guesswork. It may be very well informed, by those who have much experience, but we should not reify the process, nor rely completely on the results. Models can narrow down expertise, excluding other perspectives, and they can of course also be wildly wrong, as any modeller worth their salt will readily admit.
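To make the point about uncertain parameters concrete, here is a minimal Python sketch of the simplest epidemiological compartment model, the textbook SIR (susceptible, infectious, removed) model. It is not any of the models used by the UK government's advisers; the function names, the assumed 10-day infectious period and the initial seed fraction are illustrative assumptions. It simply sweeps R0 across a range and shows how far the projected peak and total share of people infected move.

```python
import math


def simulate_sir(r0, gamma=0.1, days=730, dt=0.1, i0=1e-4):
    """Integrate a basic SIR compartment model with forward Euler steps.

    Compartments are population fractions: s (susceptible), i (infectious),
    r (removed). gamma is the recovery rate, so 1/gamma is the mean
    infectious period (an assumed 10 days here); beta = r0 * gamma is the
    transmission rate. Returns (peak fraction infectious, fraction ever infected).
    """
    beta = r0 * gamma
    s, i, r = 1.0 - i0, i0, 0.0
    peak = i
    for _ in range(round(days / dt)):
        new_infections = beta * s * i * dt
        new_recoveries = gamma * i * dt
        s -= new_infections
        i += new_infections - new_recoveries
        r += new_recoveries
        peak = max(peak, i)
    return peak, 1.0 - s


def final_size(r0, tol=1e-12):
    """Solve the classic final-size relation z = 1 - exp(-r0 * z).

    Fixed-point iteration from z = 1 converges to the positive root when
    r0 > 1; for r0 <= 1 no large outbreak occurs, so return 0.
    """
    if r0 <= 1.0:
        return 0.0
    z = 1.0
    for _ in range(100_000):
        z_next = 1.0 - math.exp(-r0 * z)
        if abs(z_next - z) < tol:
            return z_next
        z = z_next
    return z


# Sweep an illustrative range of R0 values and compare outcomes.
for r0 in (1.5, 2.0, 2.5, 3.0):
    peak, total = simulate_sir(r0)
    print(f"R0={r0}: peak infectious {peak:.1%}, "
          f"ever infected {total:.1%} (analytic {final_size(r0):.1%})")
```

Even in this toy model, moving R0 from 1.5 to 3 roughly quintuples the projected peak, which is one way of seeing why a model fed with uncertain parameters can only ever offer a range of scenarios rather than a forecast.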

An excellent case in point was the 2001 Foot-and-Mouth Disease outbreak in the UK, when a particular model developed by one of the teams heavily involved today was used as the basis for science advice, ignoring the opinions of farmers, front-line vets and others living in the countryside (including royalty). The result was dubbed ‘carnage by computer’, involving the closing of the countryside and the mass slaughter of animals. There are of course no counterfactuals available to assess whether this was the right move or not, but the narrowness of the science advice has been widely critiqued.

With COVID-19, there are many more models, associated with a more diverse range of scientists (see some links in this Twitter thread). There is debate and deliberation in the emergency planning process between them. This is all healthy and is likely to result in more robust advice. Uncertainties are being acknowledged, and multiple scenarios are on offer. Scientists advise and politicians decide, or so goes the adage.

Is the science broad enough?

But is ‘the science’ – and so the advice – broad enough? Can we learn from the experience of living with a pandemic in ways that open up debate and encourage a more democratic deliberation? This is not easy, as the pace is so fast and the scale so huge, but it’s an important challenge. Epidemiological ‘compartment models’ are only stories about the world, with disease transmission and impact on populations constructed in terms of mathematical formulae. But there are other stories too, told by different people in different ways, and these may be just as important in understanding and responding to a new disease.

In our studies of responses to the avian influenza outbreak in the mid-2000s, the limits (indeed dangers) of a ‘reductive-aggregative’ risk-based approach to modelling and policy prescription were highlighted. In 2005, high-profile model publications framed the response around ‘containment at source’, resulting in an array of draconian measures that drastically affected the livelihoods of backyard chicken producers across southeast Asia. Yet there were contending but similarly ‘evidence-based’ possibilities emerging from other assessments, which were more appreciative of context-dependent uncertainty and complexity.

These highlighted, for example, the interaction of viral ecology and genetics (such as patterns of antigenic shift and drift), transmission mechanisms (such as the role of wild birds, backyard chickens or large poultry factory farms) and impacts (including the consequences for immunocompromised individuals and populations). They pointed to alternative narratives about causes and consequences, and to quite different policy responses from those adopted following the mainstream modelling results.

Learning lessons

While avian influenza did not turn out to be as easily transmitted between humans as COVID-19 clearly is, there are some important lessons from this experience. First among these is the importance of acknowledging uncertainties, opening up debate about possible outcomes and accepting that diverse, plural knowledges and perspectives are important.

In reflecting on the modelling of zoonotic diseases (ones that transfer from animals to humans, just as COVID-19 did) more generally, a few years ago a group of us (including mathematical modellers, anthropologists and participatory field practitioners) made the case for a plural approach to modelling that combines three Ps: process (the way disease population dynamics work), pattern (the spatial spread of disease and its correlation with various factors) and participation (understanding disease dynamics from local people’s perspectives).

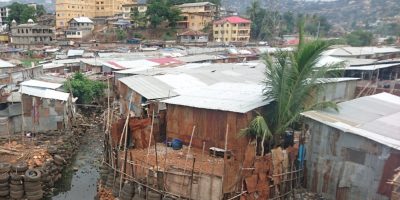

The latter, almost completely absent from the current COVID-19 response, is especially crucial as it can help improve the parameterisation of other models, but more importantly can help root findings from model scenarios in local contexts, with more likelihood that insights are taken up. As we learned from the Ebola crisis in West Africa, without linking disease response to local practical knowledges and cultural understandings, there is little chance of success. This is much more than ‘behavioural science’ and ‘nudge theory’ messaging designed by experts, and requires engagement with those affected by and living with the disease.

For, in the end, pandemics are best defeated through local forms of solidarity, mutual aid and innovation grounded in particular settings, and the scientific models and emergency plans must work with such processes. Disease responses may be informed by science (or rather multiple sciences), but they must be led by people.

This blog originally appeared on the Institute of Development Studies website.